The Human as Conductor

My health insurance changed three times in one year. Drowning in life admin, I started tinkering with AI tools. One experiment led to the next. Would-be chores became… fun.

After we sold our health tech company, HumanFirst, my COBRA insurance changed three times in one year as I moved back to the US from Europe. Normally, this would have meant spending a lot of time on the phone with my insurer, staring at EOBs (Explanation of Benefits statements), and trying to figure out what I owed and what they owed me. Instead, I started setting up new AI-driven workflows to handle everyday tasks.

Although ChatGPT by OpenAI might have more brand recognition with the public, Claude (in its various forms) by Anthropic has been the clear winner for me. I have a new sense that I am a ‘conductor’ in my life rather than the doer. My version of Claude and I co-developed this analogy:

A conductor doesn’t play every instrument. They hold the score, understand how the parts fit together, and create the conditions for each player to do their best work. The trumpet player is still the best trumpet player in the world. The conductor just knows when to call on them, and makes sure they have what they need.

That’s the shift I’ve been making with my own healthcare. I’m not replacing my doctors. Rather, I’m arriving with context already assembled. My labs are consolidated and reviewed, historical patterns flagged, and questions drafted for when we meet. My clinician can’t always do their best work when half of the relevant information lives in a portal they can’t access, a conversation with a specialist they’ve never met, or a family history I couldn’t remember off the top of my head. I couldn’t access my data easily either. Electronic Health Record (EHR) systems were built for providers and billing, and clinical data is an afterthought. Everyone has been working from an incomplete picture.

AI changes that for me, but not by putting either the patient or the doctor “in charge.” Using AI doesn’t cause a power flip (at least, not yet). Rather, it enables a third way: patient and doctor seeing each other more clearly, because the context that used to get lost in the gaps can now be surfaced. I show up with my picture assembled. My doctor confirms, challenges, and builds on it. We both do better work than either of us could alone.

That’s what these experiments taught me.

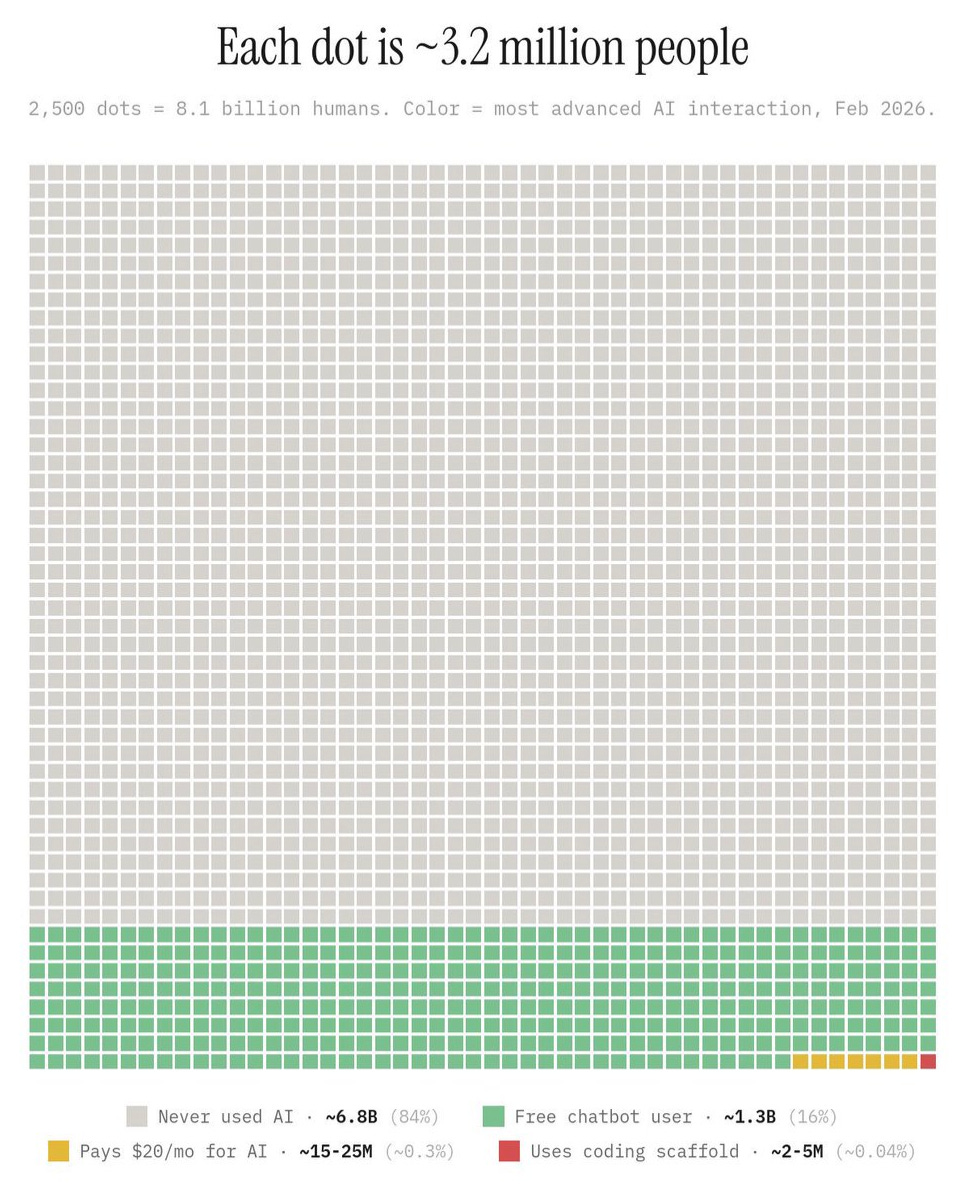

With AI, I feel like I have a jetpack… and it’s easier to set up than you might think. If you’re feeling behind or too late, this graphic by @damianplayer is for you:

The tech has changed dramatically in the past few months, and it means that everyone can be ‘technical’ now. Capabilities that used to be available only to engineers are now accessible through plain language. The main requirements to participate are curiosity and a chat interface.

The Experiments

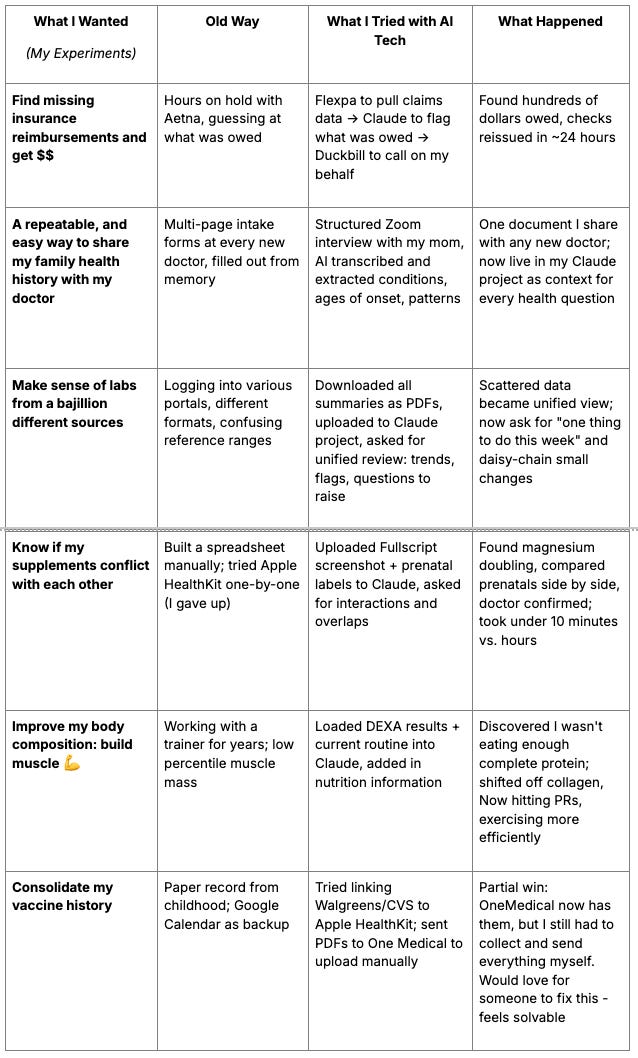

Most of these took less than a day to set up and ran in the background while I was doing other things:

Find missing insurance reimbursements and get $$

Build a reusable family health history from a conversation with my mom

Make sense of labs from a bajillion different sources

Check my supplements for interactions when adding a prenatal

Improve my body composition: build muscle 💪

Attempt to consolidate my vaccine records

Feel free to jump around.

A few things worth naming before you dive in:

On “jigs” versus “tools” (or how-tos). Someone in my favorite AI community shared a useful analogy: in woodworking, a “jig” is a personal gadget you create to help you do your work, but it is never intended for packaging and distribution to others. Right now, a lot of us have a growing toolchain of software jigs that probably couldn’t (& shouldn’t) be shared with others since they’re so custom-fit. With that caveat in mind, I’ll share some of my ‘jigs’. They aren’t out-of-the-box or product-ready, but they should help you get started.

AI can only optimize the system it’s given. And there are a lot of broken incentive systems in healthcare. Companies often make more money when you’re sick than when you’re healthy, almost by definition. As one Goldman Sachs analyst memorably asked: is curing people a “sustainable business model?” (No).

The bigger fixes require policy (not prompts). While those progress slowly, I’d rather more people experiment than let others build the future for them.

On when to use AI. A lot of people ask “can I delegate this to AI?” Sure. AI is getting better at tasks all the time. But should you?

If you’ve had the opportunity to work with a stellar executive assistant (EA), you know they could do a larger set of tasks than their agreed-upon scope. The relationship takes time. Together, you figure out how to divide the work: what needs the exec’s discernment and what doesn’t.

Before this year, I had never had this type of relationship with a non-human intelligence. There are a few quirks I’m learning about when interacting with AIs, but it’s somewhat similar to getting to know a colleague’s personality at work. Except this time, I can also more strongly influence (or program) that personality.

My personal litmus test is: “Do I have a motivation gap on a task, and how can AI break it down to help me get started?”

Momentum begets momentum. Small wins become bigger wins. I’m an efficiency junkie, so it’s fun to check things off. I’m also surprised by the strange sense of connection I feel with a non-human intelligence as we move from one successfully completed experiment to the next.

The following examples are my stories, and not canonical how-tos. With time you’ll develop your own discernment for how you want to conduct your experiments. This is what today’s internet likes to call ‘taste’.

Experiment #1: The Flexpa Insurance Recovery

𝐓𝐋𝐃𝐑: My health insurer owed me hundreds of dollars and never alerted me. I used Flexpa + Anthropic + Duckbill to find it and get checks reissued in ~24 hours. Without these tools, this life admin task would have taken weeks and a lot of Aetna hold-music.

Last month I had a sinking feeling that I hadn’t received all my reimbursement checks from Aetna over the past 12 months. I called them, sat on hold forever, and got them to void and reissue one check for a claim I knew about. But I had no way to know how many more were missing.

I’m an angel investor in Flexpa, which got me early access to their app. I downloaded my entire medical record (a huge JSON file that would have taken me forever to review), ran it through Claude, and found a bunch of claims flagged “owed to patient.” Hundreds of dollars I suspected were out there and couldn’t confirm until now. Plus some denied claims I’d always had questions about.

Then I built a DIY medical billing advocate. A “medical defender” if you will:

Step 1: Flexpa to pull my records

Step 2: Claude to analyze claims, flag what’s owed, and draft scripts/emails

Step 3: Duckbill to call Aetna for me (h/t Libby Leffler)

After signing some PHI authorization forms, my Duckbill agent got on the phone (a few times) with Aetna, found checks sent to an old address, and had them voided and reissued. They also explained why certain claims were denied and how to fix them by submitting new info, plus which payments went to providers instead of me so I know who to follow up with.

I did all of this in ~24 hours between other tasks. The AI-tasks flowed in the background, which was the first time I clearly felt the human-as-conductor experience. It’s cool that this AI workflow saved me days/weeks, frustration, and $$. (h/t Tim Schneider from BHI who inspired me to write this experience up publicly.)

I asked Flexpa’s CEO Andrew Arruda if I could share the link, and apparently anyone can now pull their own data (yay): my.flexpa.com

A few tips for exploring your insurance data:

First, review denied claims. Check if the same provider got approved for the same procedure before. If so, ask your AI to draft an email: “You approved this in the past. What’s missing now?”

Look for inconsistent reimbursements. My acupuncture visits were reimbursed at different rates for the same procedure. I had my AI prep scripts for the Duckbill agent to ask about it.

Give yourself time to poke around. The first time I looked at my claims data, I didn’t know what I was looking for. Patterns surfaced anyway.

Find a community. Pick 2–5 people who like tinkering and host a casual Show and Tell. It can be easier to grasp what’s possible when you see someone else do it.
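The first two tips above are mechanical enough to sketch in code. Below is a minimal Python sketch, assuming the claims have already been flattened out of the exported JSON into simple records; the `Claim` fields and the `triage` function are hypothetical and do not reflect Flexpa’s actual export format. It lists claims with money owed to the patient, denials for a provider and procedure that were paid at least once, and procedure codes reimbursed at more than one rate.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Claim:
    # Hypothetical flattened claim record; field names are illustrative only.
    claim_id: str
    provider: str
    procedure_code: str
    status: str             # assumed values: "paid" or "denied"
    paid_amount: float      # amount the insurer paid out
    owed_to_patient: float  # reimbursement the patient should have received


def triage(claims: List[Claim]) -> Tuple[List[Claim], List[Claim], Dict[str, List[float]]]:
    # 1. Money the insurer owes the patient.
    owed = [c for c in claims if c.owed_to_patient > 0]

    # 2. Denials for a provider/procedure pair that was paid at least once
    #    (this sketch has no dates, so it ignores ordering).
    paid_pairs = {(c.provider, c.procedure_code) for c in claims if c.status == "paid"}
    recheck = [
        c for c in claims
        if c.status == "denied" and (c.provider, c.procedure_code) in paid_pairs
    ]

    # 3. Procedure codes reimbursed at more than one rate.
    rates: Dict[str, set] = defaultdict(set)
    for c in claims:
        if c.status == "paid":
            rates[c.procedure_code].add(round(c.paid_amount, 2))
    inconsistent = {code: sorted(r) for code, r in rates.items() if len(r) > 1}

    return owed, recheck, inconsistent
```

Each output maps onto one tip: `recheck` feeds the “You approved this in the past” email, and `inconsistent` feeds the questions for a call. It is a starting point for drafting scripts, not a verdict on what the insurer actually owes.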

Let’s zoom out for a moment to the real issue: AI won’t fix a system with dark patterns. Why is Aetna still mailing checks in 2025? Why are there no alerts when checks go uncashed? The better solution isn’t a smarter AI workflow for chasing checks. It’s offering direct deposit for out-of-network reimbursements. I’m looking at you, Aetna.

So your insurer knows they owe you money and they just… don’t tell you. The good news: your medical records know too, and now your AI tools can surface what insurers won’t.

This first experiment lit a fire in me about the “personal health records 2.0” era. Let’s try a few more.

If you’d like a sample “jig” (prompt) to work on this problem yourself, you can find that here.

Experiment #2: Mom’s Oral History + Impact on Exercise Routines

I often dreaded going to a new doctor. Each clinic asks for different intake forms, and somehow I end up filling out the same 12-page packet twice (once online, and again on a clipboard in the waiting room). I’ve always wanted a “common app” for family health history.

Turns out LLMs are good at restructuring data if you have the ground truth. So, I scheduled a one-hour Zoom interview with my mom about our family health history, and ran the transcript through Claude to extract conditions, ages of onset, which relatives, and patterns to watch for. Now I have a document I can remix for any new doctor, and I also shared it with my sisters and extended family.

Even better: I use this document as context in my Claude project. A Claude “project” lets me add my own files and data, and the model references them every time I ask a question.
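The restructuring step can be pictured as turning a transcript into rows like these. A minimal Python sketch, assuming the model has already extracted (relative, condition, age) entries from the interview; `FamilyHistoryEntry` and `intake_summary` are hypothetical names. It groups entries by condition so the summary can be pasted into any intake form, and marks conditions that recur across relatives.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FamilyHistoryEntry:
    relative: str        # e.g. "grandmother"
    condition: str       # e.g. "heart attack"
    age: Optional[int]   # age at onset or death, if known


def intake_summary(entries: List[FamilyHistoryEntry]) -> str:
    # Group relatives by condition so each condition appears once.
    by_condition = defaultdict(list)
    for e in entries:
        by_condition[e.condition].append(e)

    lines = []
    for condition in sorted(by_condition, key=str.lower):
        group = by_condition[condition]
        people = ", ".join(
            f"{e.relative} ({e.age})" if e.age is not None else e.relative
            for e in group
        )
        # Flag conditions that show up in more than one relative.
        flag = " [multiple relatives]" if len(group) > 1 else ""
        lines.append(f"{condition}: {people}{flag}")
    return "\n".join(lines)
```

Because the output is plain text, the same summary can be reused for each new clinic or shared with relatives.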

Two examples of helpful content from my mom: My grandmother died of a heart attack two months short of her 85th birthday. Her identical twin died at 60, also of a heart attack. As family lore has it, her twin refused to take her blood pressure medication and didn’t exercise. Both twins had the same genetics, and my grandmother got to live 25 years longer.

A second tragic storyline in my family was how Alzheimer’s ravaged my grandfather, one of the first people to work on fiber optics out of MIT Lincoln Lab.

Now when I ask Claude to help with my exercise routine, it reminds me:

“Ruth vs. Lorraine: Both had the same genetics, 25-year lifespan difference. Ruth exercised and took her meds. Be Ruth.”

Or: “Brain protection: builds mitochondria, clears amyloid, protects against the family Alzheimer’s pattern.”

These nudges might not be your cup of tea (you can design your own!). For me they’ve driven more consistency in my workouts than anything since the subtle social pressure of my college sports team. And it feels great.

If you want to try this for yourself, here’s a “jig” to build your own family health history.

Experiment #3: Labs Consolidation

Over the past few years, I’ve gotten labs from a bajillion channels: Labcorp & Quest Diagnostics (ordered by various clinicians), Parsley Health, Function Health, Levels Health, a full body MRI from Ezra, a Coronary Artery Calcium (CAC) scan from LumiHeart, genetic tests from Natera and Orchid Health, and a DEXA scan for bone density and body composition.

(Why a full body scan? A friend had headaches, and her doctors couldn’t figure out why. She paid out-of-pocket for a Prenuvo scan and found a brain tumor. She got treatment and is now in remission.)

My lab results sat in various portals, in different formats, with unclear reference ranges. So I downloaded summaries from each, uploaded them to a Claude project, and asked for my trends over time, flags on out-of-range metrics, and anything to discuss with my doctor at One Medical.
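The consolidation step is essentially a merge, sort, and flag. A minimal Python sketch, assuming each portal’s results have been transcribed into rows with a test name, date, value, unit, and that lab’s own reference range; `LabResult` and `consolidate` are hypothetical. It deliberately refuses to compute a trend across different units, since labs do not always report the same ones.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass
class LabResult:
    source: str      # e.g. "Labcorp", "Quest Diagnostics"
    test: str        # test name as printed by that lab
    drawn: date
    value: float
    unit: str
    ref_low: float   # reference range reported by that lab
    ref_high: float


def consolidate(results: List[LabResult]) -> Dict[str, dict]:
    # Normalize test names lightly so "Vitamin D " and "vitamin d" line up.
    by_test = defaultdict(list)
    for r in results:
        by_test[r.test.strip().lower()].append(r)

    report = {}
    for test, rows in by_test.items():
        rows.sort(key=lambda r: r.drawn)
        units = sorted({r.unit for r in rows})
        if len(units) > 1:
            # Mixed units: skip the trend and leave it for a human to review.
            report[test] = {"needs_review": units}
            continue
        report[test] = {
            "first": rows[0].value,
            "latest": rows[-1].value,
            "change": round(rows[-1].value - rows[0].value, 2),
            # Each result is checked against the reference range its own lab reported.
            "out_of_range": [
                (r.drawn.isoformat(), r.source, r.value)
                for r in rows
                if not r.ref_low <= r.value <= r.ref_high
            ],
        }
    return report
```

The report is a list of questions to bring to a clinician, not an interpretation of the results.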

Now I ask my Claude project for the “one thing” I can do each week. I’ve been daisy-chaining minor changes like nutrition tweaks and sleep adjustments. Those small wins unlocked bigger ones. I even found the motivation to address, in physical therapy, a running-related IT-band issue I’d been ignoring for 20 years.

What feels different this time around is that I programmed my Claude project to respond to how my brain works. I like structure, and also novelty. The recommendations match my patterns. I’m hitting PRs in the gym, my sleep has improved and my HRV has increased, and I’m spending less total time on these tasks than before. This experience feels like an antidote to the attention economy: my progress is no longer measured by “time spent.”

A note before the jig: AI can hallucinate, and none of this replaces a conversation with your doctor. What I’ve found is that staying curious and then confirming with a clinician is a reasonable division of labor. Here’s a “jig” to get started.

Experiment #4: Medication Overlap Check

I order most of my supplements through Fullscript and track everything in a Google Sheet (e.g., supplement, dosage, frequency), which I share with my doctor periodically.

I was embarking on an egg freezing journey and needed to add a prenatal to my routine. I wanted to figure out the right brand (e.g., Ritual, WeNatal, Needed, Modern Fertility, Perelel, others) and make sure nothing in my current supplement stack would interact with it.

Three years ago this was a massive undertaking. Every brand includes different ingredients at various doses. I built a spreadsheet in 2021 that got unwieldy. Then I tried Apple’s HealthKit medication feature, but you have to add each supplement one by one. It was a tedious UX, and I gave up.

(The only person I know who had the fortitude to build this system before AI was Natasja Nielsen; here’s her write-up. She’s a legend, and now runs the Open Label Research Foundation.)

What I tried this year: I uploaded a screenshot of my Fullscript order and photos of the leading prenatal nutrition labels to Claude, then asked: Are there any interactions? Overlaps? Which prenatal makes sense given what I’m already taking?

Claude flagged that I was doubling up on magnesium and compared the prenatals side by side, and I sent the output to my doctor. She confirmed it looked good. Total time: under 10 minutes. I’m now taking fewer total supplements and spending less money.
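The overlap check itself is simple bookkeeping once the labels are transcribed. A minimal Python sketch, assuming each product’s label has been typed in as ingredient amounts; the product names and the `find_overlaps` function are hypothetical. It only finds ingredients that appear in more than one product and totals them when the units match; whether a total is too high is still a question for a clinician.

```python
from collections import defaultdict
from typing import Dict, Tuple

# product -> {ingredient: (amount, unit)}
Stack = Dict[str, Dict[str, Tuple[float, str]]]


def find_overlaps(stack: Stack) -> Dict[str, dict]:
    # Collect every (product, amount, unit) per ingredient, case-insensitively.
    seen = defaultdict(list)
    for product, ingredients in stack.items():
        for name, (amount, unit) in ingredients.items():
            seen[name.strip().lower()].append((product, amount, unit))

    overlaps = {}
    for name, entries in seen.items():
        if len(entries) < 2:
            continue  # appears in only one product: no overlap
        units = sorted({unit for _, _, unit in entries})
        same_unit = len(units) == 1
        overlaps[name] = {
            "products": sorted(product for product, _, _ in entries),
            # Only add amounts together when every label uses the same unit.
            "total": sum(amount for _, amount, _ in entries) if same_unit else None,
            "unit": units[0] if same_unit else units,
        }
    return overlaps
```

Running it once per candidate prenatal gives a side-by-side view of which one adds the least duplication to the existing stack.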

One observation on incentives: this would be a hard feature for a Fullscript product manager to advocate for. Their business benefits when I buy more, not fewer. An AI that advocates on my behalf, rather than on the marketplace’s, changes that dynamic entirely.

Experiment #5: Building Muscle (Finally!)

Earlier this year, I booked a DEXA scan to check bone density and body composition, partly prompted by my Zoom interview with my mom (Experiment #2 surfaced a family history of osteopenia). Bone density came back fine. But my muscle mass percentile was shockingly low.

I felt frustrated — how could that be? I’d worked out with a trainer for years.

I loaded the results into Claude and walked through my routine. The underlying issue wasn’t how much I was exercising, it was what I was (not) eating. I wasn’t getting enough grams of protein. And a lot of what I thought was protein was collagen-based (bone broth, Vital Proteins powder). Collagen is an incomplete protein that doesn’t contribute much to muscle growth.

So I finally learned about macros. I shifted to ‘complete’ proteins (whey, chicken, tofu), cut back on collagen-based ones, and started eating more overall. I’m hitting PRs now and exercising more consistently, in less total time.
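The arithmetic behind that shift is worth making explicit. A minimal Python sketch, assuming a food log where each entry is tagged as collagen-based or not; the `protein_tally` function, the log format, and the daily target are hypothetical, and a real target should come from a clinician or dietitian. As a simplification, it counts all non-collagen protein toward the target, which glosses over differences among other protein sources.

```python
from typing import Dict, List, Tuple

# (food, grams of protein, is_collagen_based)
FoodLog = List[Tuple[str, float, bool]]


def protein_tally(foods: FoodLog, target_g: float) -> Dict[str, float]:
    total = sum(grams for _, grams, _ in foods)
    # Collagen is excluded from the count that goes toward the muscle-building target.
    non_collagen = sum(grams for _, grams, is_collagen in foods if not is_collagen)
    return {
        "total_g": total,
        "non_collagen_g": non_collagen,
        "collagen_g": total - non_collagen,
        "gap_to_target_g": max(0.0, target_g - non_collagen),
    }
```

The useful number is `gap_to_target_g`, which ignores collagen entirely; `total_g` is the figure that can make a collagen-heavy diet look adequate.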

The AI caught a marketing fallacy: collagen protein ≠ muscle-building protein. It’s unlikely I would have hit my muscle targets with my previous diet no matter how much I worked out.

The jig for this is similar to the one in Experiment #3. One thing I’d recommend: in Claude’s “personal preferences,” ask it to “default to scientific evidence” and “ask clarifying questions if unsure.” These preferences apply to every conversation and make the outputs noticeably more useful for me.

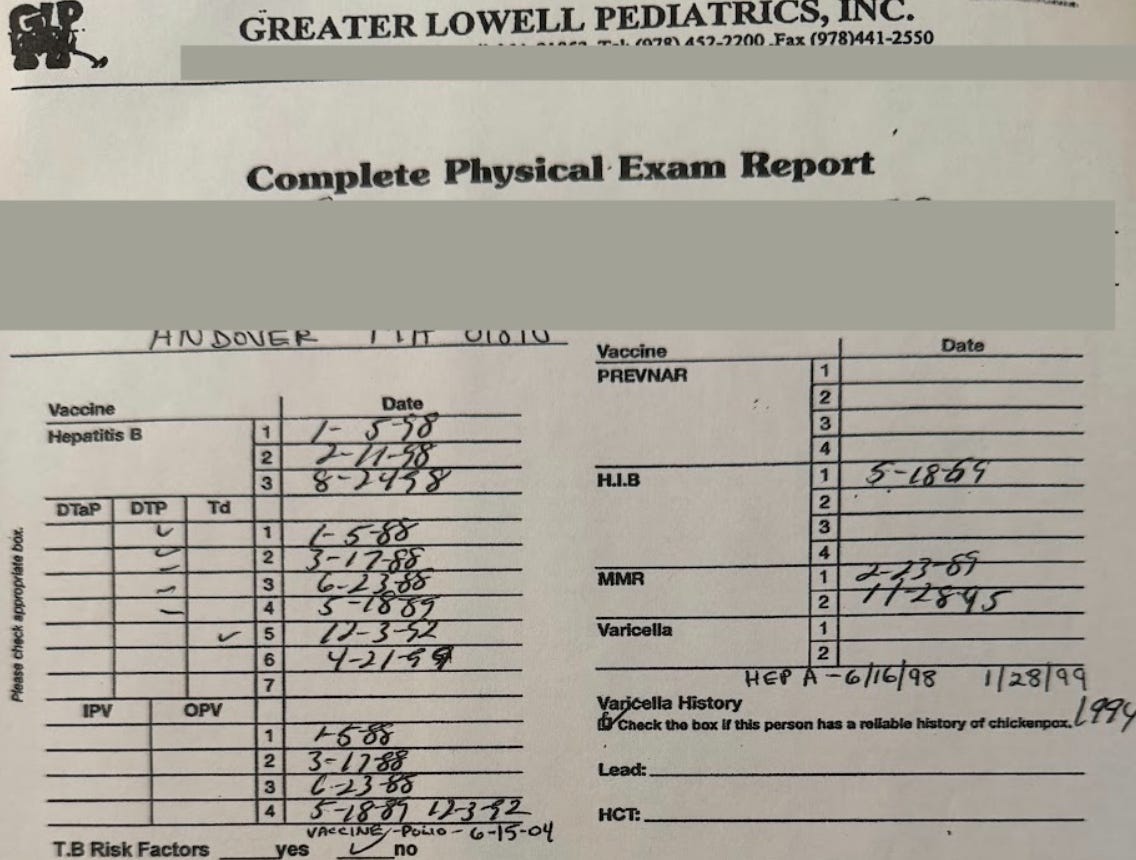

Experiment #6: The Vaccine Paper Trail (Failed Attempt)

Did you have one of these growing up?

My vaccine record was nearly a sacred document. My mom and doctor updated it every year, and I carried it to school in a little plastic folder so it wouldn’t get wet.

Fast forward to 2026: I was about to get a flu shot at Walgreens and they asked about my last vaccine. Hm, the only place I could find it was my Google Calendar. I always log health appointments, so I can search “flu” or “Tdap” or “COVID” and see the date and location.

Feeling ambitious after Experiments #1–5, I thought: surely in 2026 I can get a digital version of that childhood record. All my vaccine data, searchable, not scattered across Google Calendar, ideally as a nicely formatted vaccine record.

I only get vaccines from three places (Walgreens, CVS, and One Medical), and all three let me export PDFs. My issue was combining them. I tried linking Walgreens and CVS to Apple HealthKit. It showed the integration but wouldn’t pull all the data, only COVID-19 records (sigh; I’m guessing that’s an artifact from when we had to prove vaccination to get into restaurants).

The one partial workaround: One Medical lets me upload records via their chat concierge. I sent them all my CVS and Walgreens PDFs, and they added them to my One Medical record. Useful, but I still had to collect and send everything myself. Not so different from that plastic folder from childhood. This time, though, I also saved everything in my Claude project, so I’m still in conductor mode.
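The combining step that none of the apps above handled is mostly deduplication. A minimal Python sketch, assuming the PDF exports have already been transcribed into (source, vaccine name, date) rows; the `ALIASES` table and the function names are hypothetical, and real exports would need more name variants than shown. The same shot recorded by two places collapses into one entry that lists both sources.

```python
from datetime import date
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical name normalization: lowercase variant -> canonical name.
ALIASES = {"influenza": "flu", "flu shot": "flu", "covid-19": "covid"}

Record = Tuple[str, str, date]           # (source, vaccine name, date given)
MergedRow = Tuple[str, date, List[str]]  # (vaccine, date, sources)


def merge_vaccines(records: List[Record]) -> List[MergedRow]:
    merged: Dict[Tuple[str, date], Set[str]] = {}
    for source, name, given in records:
        normalized = name.strip().lower()
        vaccine = ALIASES.get(normalized, normalized)
        # Same vaccine on the same day from two sources is treated as one dose.
        merged.setdefault((vaccine, given), set()).add(source)
    rows = [(v, d, sorted(srcs)) for (v, d), srcs in merged.items()]
    return sorted(rows, key=lambda row: (row[1], row[0]))


def last_dose(merged: List[MergedRow], vaccine: str) -> Optional[date]:
    # Answers "when was your last flu shot?" without searching a calendar.
    dates = [d for v, d, _ in merged if v == vaccine]
    return max(dates) if dates else None
```

The sorted output doubles as the “nicely formatted vaccine record”: one row per dose, oldest first, with every place that has a copy.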

(I’m sharing this because I’d love for someone to fix this, and perhaps a product person at one of these companies is reading! I’d be happy to road-test this workflow with you…)

On keeping humans in the loop

I prefer to keep humans in the loop (Why? So an LLM doesn’t, say, nuke my inbox). Automation is not my end-game objective for this project.

I enjoy being The Conductor: making the judgment calls, observing the flow, catching what the AI misses. My AI system handles the tedious parts. I handle what requires extra context or trust. I’ve also made deliberate tradeoffs on security:

For personal data such as email and calendar, I’m cautious. I generally don’t want any tool that can edit or delete. I strongly prefer AI interactions that are read-only, and provide suggested actions.

For health data, I’m much more permissive than younger me would have expected. My threat model has shifted. I used to worry about someone accessing my health information. Now I worry more about not getting care because the system made it too hard, or dark patterns, or I gave up. The risk of inaction feels higher than the risk of sharing.
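The “read-only, suggest actions” rule for email and calendar can be enforced in code rather than left to a model’s good behavior. A minimal Python sketch, assuming tools are plain functions keyed by action name; the `Gate` class and the action names are hypothetical and do not describe any specific assistant’s permission system. Read actions run immediately, while anything that could edit or delete is queued until a human approves it.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

READ_ACTIONS = {"read", "search", "list"}  # hypothetical action names


@dataclass
class Gate:
    tools: Dict[str, Callable[..., Any]]
    pending: List[Tuple[str, tuple]] = field(default_factory=list)

    def call(self, action: str, *args: Any) -> Any:
        if action in READ_ACTIONS:
            return self.tools[action](*args)
        # Edits and deletes become suggestions instead of side effects.
        self.pending.append((action, args))
        return None

    def approve(self, index: int) -> Any:
        # A human picks a queued suggestion; only then does it run.
        action, args = self.pending.pop(index)
        return self.tools[action](*args)
```

The design choice is an allowlist of reads rather than a blocklist of writes, so a new, unexpected action defaults to “ask the human.”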

As I design my second-brain health system, I want to keep memory, compute, and interfaces separate. This shapes how I think about my data (Flexpa JSON, lab PDFs) + my AI models (Claude, others) + my interfaces (Slack, email, browser, or terminal). I don’t want to be locked into one system. I can remix each component as needed and as each part of the stack evolves.
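Keeping memory, compute, and interfaces separate amounts to coding against small interfaces instead of one product. A minimal Python sketch using `typing.Protocol`; the `DataSource`, `Model`, and `Interface` protocols and the `ask` function are hypothetical, and nothing here calls a real Claude API. Any data source, model, or delivery channel that satisfies its protocol can be swapped in without touching the others.

```python
from typing import Iterable, List, Protocol


class DataSource(Protocol):  # memory: claims JSON, lab PDFs, notes
    def documents(self) -> Iterable[str]: ...


class Model(Protocol):  # compute: Claude or any other model
    def answer(self, question: str, context: List[str]) -> str: ...


class Interface(Protocol):  # delivery: Slack, email, browser, terminal
    def deliver(self, text: str) -> None: ...


def ask(question: str, sources: List[DataSource], model: Model, interface: Interface) -> None:
    # Gather context from every source, ask the model, deliver wherever is handy today.
    context = [doc for source in sources for doc in source.documents()]
    interface.deliver(model.answer(question, context))
```

Swapping Slack for email, or one model for another, means writing one small class; the data layer never has to move.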

Megan Verena Joyce, CEO of Duckbill, made an observation about interfaces I often come back to: “The ideal surface through which people will actually consume AI remains genuinely unsettled — app, API, voice, agent, [or] something we don’t have a name for yet.”

An arms race: what happens as more players use AI?

What if everyone deployed these experiments at scale? Aetna can handle me as an edge case. At scale, the insurer would need to change. And if every provider used AI to upcode, costs would rise. We’d find ourselves in an arms race with no referee.

According to this January’s Garner Health report, the healthcare sector has adopted AI at 2.2x the rate of the broader economy, with its total spend tripling to $1.4B last year. But 89% of that capital is going into back-office tools designed to maximize provider revenue, which fuels exactly that arms race: providers upcoding, payers denying faster, “severity inflation” driving a 1.7% increase in employer healthcare spend.

The full picture of who’s using AI for what:

Providers: upcode (charge more for the same visit)

Payers: deny claims faster

Pharma: optimize pricing

Patients (like me): get through the health admin slog

Everyone is optimizing for their position; few are improving the system. Until we fix the underlying incentives, this is what we get: warring algorithms extracting rents from each other.

Perhaps I’m too cynical, but this is why I hope we can agree as a society that everyone has the right to healthcare: not only the poor via Medicaid, the old via Medicare, or the (non-1099) fully employed via employer plans. The person delivering your Uber Eats likely falls into none of those categories and, as a result, is far less likely to be able to afford health insurance.

Until that changes at a policy level, the best any of us can do is learn to work the system better than it works us.

The Patient as a Creator

A conversation with Thorsten Wirkes at Bertelsmann helped me name why this moment matters: these AI tools let anyone be a creator. Not in the influencer sense, but in a more fundamental, human sense.

I’m reminded of Meinrad Craighead’s poem:

Whether we are weaving tissue in the womb or

weaving imagery of the soul — our work is the

work of conception, gestation and birth . . .

the mother has but one law — create,

make as I do . . . transform one substance

into another . . . transmute blood into milk,

clay into vessel

feeling into movement

word into song

egg into child

fiber into cloth

stone into crystal

memory into image and

body into worship

Creation is the human birthright. And AI, at its best, helps us express that.

What’s happening today feels like a bigger-scale version of what Substack did for writers, Shopify for merchants, or Excel for analysts. The barrier to doing something that once required an institution (capital, or a lot of software engineers) dropped low enough that individuals (patients) can do it themselves.

You’re a conductor now. Not because institutions handed you that power, but because the technology got capable enough that you can take it. Put that jetpack on.

Thanks to: Thorsten Wirkes, Tim Schneider, Ginny Fahs, Julia Chou, Reshma Khilnani, John Wilbanks, Andrew Arruda, Rachel Katz, Vince Hartmann, Caroline Reiner, Christina Bognet, John Weldon, and many others for conversations that shaped this piece.

Afterword

Getting unstuck: learning to retire my old mental models

A few years ago, I wrote a piece on how software was “eating” clinical trials. Fragments from that work turned into a company called HumanFirst, which we sold to ICON (NASDAQ: ICLR) in 2024.

After the sale, I took a sabbatical and tried to apply similar frameworks (“Jobs to be Done,” market maps) to understand how AI is changing care delivery. None of my drafts worked. Eventually I realized why: “Jobs to be Done” puts the doctor at the center of things, with the linear flow I kept mapping (i.e., onboarding > check-in > visit > orders > billing). But patients don’t experience healthcare that way. We have multiple doctors, overlapping needs, things happening all at once. A linear framework (even a patient-friendlier one) wasn’t going to be the answer.

I had a similar problem with how I was approaching personal health records (PHRs). I kept thinking of them as apps. But an app is a company-level view and reflects a product built for patients, not by them. My old frameworks and mental models were breaking in the age of AI. That felt disconcerting, and also freeing.

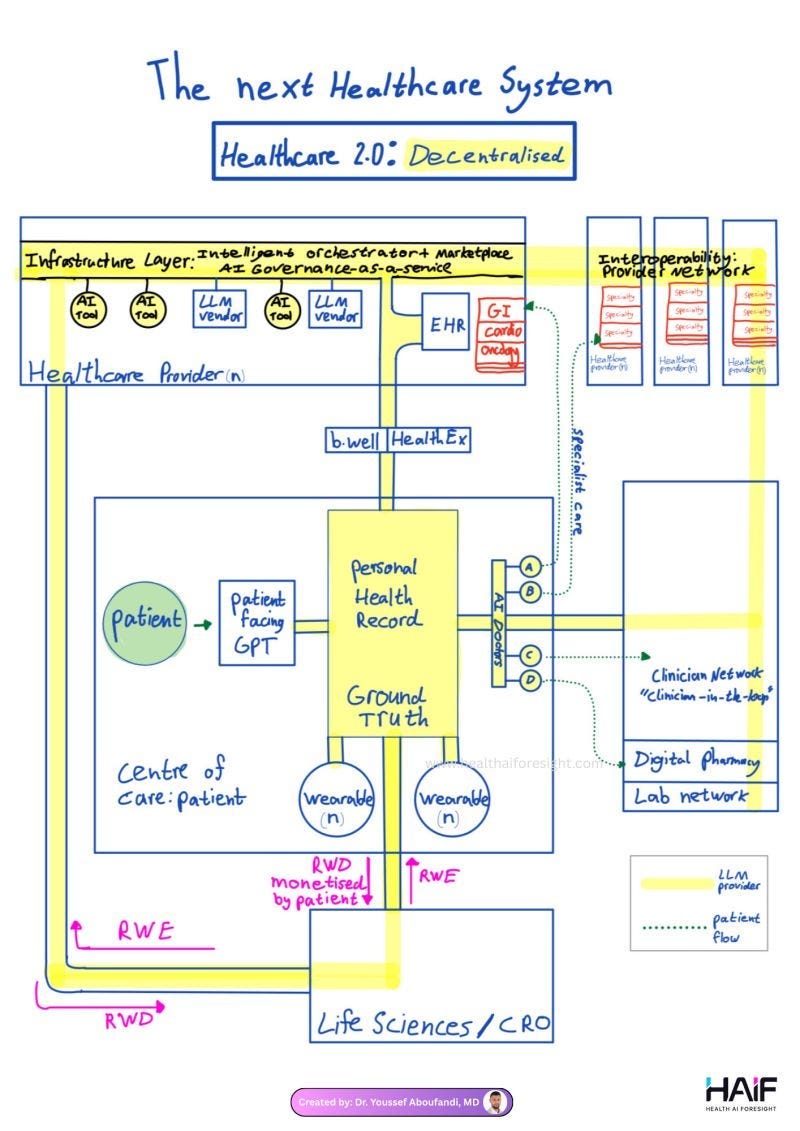

The Flexpa experience was my big aha moment. Decentralization isn’t inherently good or bad; it’s simply the current, unchangeable state of things. Years of interoperability policy and “one place for everything” efforts have been well-intentioned but largely haven’t worked for the patient experience. My HealthKit data is separate from my labs, claims, supplement list, and MRIs. But in 2026 that turns out not to matter as much as I thought: LLMs can synthesize across siloed sources.

All these years, I’d been thinking about decentralization wrong. It’s less an engineering problem and more a governance one. Flexpa, Claude and Duckbill act as a coordination layer. If it works well, clinicians move up the stack into oversight and discernment, and stop being some of the most expensive data entry workers on earth (h/t to Dr. Youssef Aboufandi for that framing). This picture unlocked these concepts for me:

There’s more shared responsibility. The data and needs are compiled by the patient, and then confirmed by the doctor, rather than the other way around (as it’s traditionally been).

I also like that my new system removes emotion from transactions that are usually loaded with it. My insurer doesn’t have to deal with a confused, frustrated patient on hold. They get a clear, properly formatted adjudication letter and some scripts from a dispassionate Duckbill human calling on my behalf. That turns out to be useful for everyone involved (I hope!).

As Flexpa CEO Andrew Arruda put it: “Your health data shouldn’t live in an app. It should sit with you and plug into whatever you want.” It took me a while to understand what that meant in practice, but now I get it.

A ‘second brain’ for health (and why it has only worked in the past few months)

When I set out on this journey, I didn’t intend to build a next-gen personal health record. It organically emerged from the experiments. Each time I added a new data source (claims, labs, family history, supplements) the Claude project got a little more useful. After a few months, I had something that could not merely store information, but advocate on my behalf.

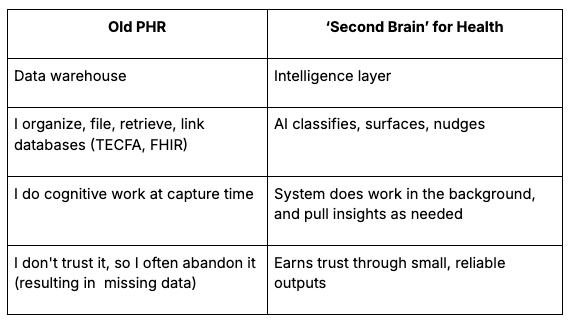

A hot concept these days is the “second brain,” a personal knowledge management system that offloads memory and surfaces things when you need them. Popularized by Tiago Forte and expanded on recently by Nate B. Jones, it acknowledges a key insight: storage systems fail because they ask you to do cognitive work at the wrong moment, right when you just want to capture something and move on. Old-school patient portals had these problems, and then some.

Here are some differences between the old approach and the “second brain” I’ve been building:

In practice, my new system is simple: I dump everything into one project. I don’t build folder structures or differentiate between a bill, lab result, or post-appointment voice memo. My AI models do the routing, and I ask for specific outputs, not open-ended reviews. (Think: less “analyze my claims,” more “what does Aetna owe me and what’s the script to get it?”)

Reflections from founders

While I was preparing this piece, a few conversations lived in my head rent-free.

The Abridge View: The Conversation Is the Unit

From a conversation with John Wilbanks (formerly of Astera) and Julia Chou (COO of Abridge):

“The atomic unit of the patient experience is the conversation: patient and clinician. That conversation gets transformed into downstream artifacts: chart notes, care plans, referrals.”

Scribes used to be passive note-takers. Now they’re verifying chronic conditions, checking prior authorization, and supporting billing. The conversation contains more context than the note. Scribes are building an intelligence layer that captures what the EHR can’t.

Wilbanks asked a question I keep thinking about: “How do you get from ‘bullshit’ to ‘holy shit’? How do you build toward a trusted conversational relationship?”

The Abstractive View: Patient Intelligence

From a whiteboard session with Vince Hartmann and Caroline Reiner at Abstractive Health:

EHRs captured the record but often lost the context. Scribes can capture more context, but it’s often locked in transcripts. The next layer, what Vince calls Patient Intelligence, stitches together encounters, claims, pharmacy, devices, and social context into one view. No one does this well (yet). That’s what I’ve been doing manually with my second brain and the experiments above, and the team at Abstractive does it even better at the enterprise level.

The Medplum View: Headless EHRs and Patient-Built Stacks

In a conversation with Reshma Khilnani, CEO of Medplum (note: I’m an advisor), I noticed that what she’s building as an open-source company and what I’ve been building as a patient follow similar logic, just from opposite directions.

Companies like Medplum, Welkin and Healthie are building “headless EHRs,” which separate the data layer from the interface layer, so clinics have more control over patient experience without being locked into Epic, which holds records for roughly 300 million Americans and bundles everything together: database, workflows, billing, scheduling, patient portal (MyChart).

My stack follows the same architecture without the name: Flexpa (plus PDFs) for data, Claude for compute, and whatever interface I need that day. The Epic monolith is getting decomposed from both ends. No one can compete with it head-on, but lots of people are building around it: patients building their own stacks, clinics choosing flexible infrastructure.

In my view, how those two movements meet is one of the more interesting questions in health tech right now.
